In 1997, after Garry Kasparov lost his historic chess match to IBM’s Deep Blue, he didn’t just walk away or rail against the machine. Instead, he started a new kind of competition called “Advanced Chess.” In these matches, a human player and a computer worked together as a team: a “Centaur.”

What happened next was unexpected. In the freestyle tournaments that grew out of this format, most famously one in 2005, amateur players with ordinary computers beat grandmasters and higher-end chess computers. They knew when to listen to the machine and when to override it. They used the computer to explore possibilities, but they used their human judgment to make the final call.

In other words, the most powerful force wasn’t the smartest machine but the best collaboration.

Today, we are at a similar crossroads with Artificial Intelligence. We’ve built the machines, but we haven’t quite figured out how to be Centaurs. And that might be why AI adoption is stalling.

The Diffusion Mystery

If you look at the headlines, AI is taking over the world. But if you look at the data, the picture is more complicated.

Everett Rogers, the legendary sociologist who gave us the “Diffusion of Innovations” theory, taught us that technology doesn’t spread simply because it’s better. It spreads because it fits into our lives, our norms, and our trust networks. Right now, AI has a fit problem.

According to McKinsey’s 2025 global research, while almost every company is playing with AI, very few have successfully scaled it. The obstacle might be the kinds of problems we are trying to solve with AI. It’s not that the technology is too complex; it’s that we’re trying to use a “tame” solution for a “wicked” world.

Tame Tasks vs. Wicked Problems

In the 1970s, design theorists Horst Rittel and Melvin Webber identified two types of challenges:

- Tame Problems: These have a clear goal and a clear stopping rule. Think of a puzzle or a math equation. Coding is often a tame problem. You write the script, you run the test, and it either works or it doesn’t. This is why AI adoption has worked quite well for developers.

- Wicked Problems: These are messy. They have no clear definition and no right answer, only “better” or “worse” ones. Moreover, every time you try to solve a wicked problem, the problem changes. Think of education, healthcare, or leading a team.

When we try to use AI to solve a wicked problem through pure automation, we fail because wicked problems require judgment, and good judgment requires something else.

Turbulent Fields

Systems theorist Eric Trist called the environment we live in today a “turbulent field.” Imagine trying to play a game of soccer, but the grass is moving, the goals are shifting, and the other team keeps changing the rules. That’s turbulence. And turbulence creates wicked problems.

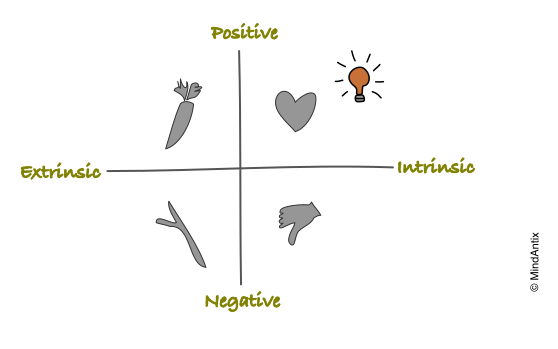

In a stable world, you can rely on data and optimization. But in a turbulent world, more data often leads to more confusion. Instead of more data, you need a North Star that reduces the number of variables you have to optimize for. Trist argued that values are effective North Stars in solving such complex problems. They clarify direction by eliminating the options that don’t fit within them.

This might be one reason why solving problems with AI is so challenging. Without clearly defined values, AI becomes a black box that’s hard to trust.

Designing with Values

If we want AI to actually work for us, we have to stop designing for automation and start designing for human flourishing.

This brings us to one of the most effective frameworks I have seen in social science: Self-Determination Theory (SDT), developed by Edward Deci and Richard Ryan. SDT names three basic needs (autonomy, competence, and relatedness), which Daniel Pink popularized as autonomy, mastery, and purpose. For people to be at their best, they need all three:

- Autonomy: The desire to be the author of our work and lives.

- Mastery (or Competence): The urge to learn new things and get better at skills that matter.

- Purpose (SDT’s Relatedness): The yearning to feel connected to others and to do what we do in the service of something larger than ourselves.

The “Automation Trap” kills all three. If an AI writes your entire report, you lose your autonomy (you’re just a spectator). You lose your mastery (your skills begin to atrophy). And eventually, you lose your sense of purpose.

This is one of the “Ironies of Automation.” As researcher Lisanne Bainbridge pointed out in her 1983 paper of that name, the more we automate, the more we rely on humans to handle the rare, high-stakes crises. But if the human has been sidelined by the automation, they no longer have the skills to save the day when the machine fails.

Nowhere is this tension clearer than in the classroom. If a student uses AI to generate an essay, the task is finished, but the learning never happened.

Learning requires productive struggle. Elizabeth and Robert Bjork’s research on “desirable difficulties” shows that we learn best when the process feels a little bit hard. When we remove the struggle, we remove the growth.

If we want AI to diffuse in education and, for that matter, in any knowledge-work field, we have to move from “Answer Engines” to “Thought Partners.”

A New Blueprint for the AI Collaborator

So, what does a value-driven, human-centered AI look like? It follows a different set of design principles:

1. Values Over Vibes

Wicked problems are resolved by making choices based on what we value most. An AI collaborator shouldn’t hide these choices. It should surface them. Instead of saying “Here is the best strategy,” it should say “If you value speed, do X; if you value employee well-being, do Y.”

2. Design for Mastery

Success shouldn’t just be measured by task completion. It should be measured by capability gained. Does this AI help the user understand the problem better? Does it challenge their assumptions? A great AI should function like a coach, nudging the user to do their best thinking rather than doing the thinking for them.

3. Human Stewardship

In a turbulent field, the “correct” answer is often a conversation. AI can widen our options and test our scenarios, but humans must steward the meaning. We are the ones who decide which values are important and which trade-offs are worth making.

The Question for 2026

As we stare down 2026, we need to stop asking, “What can AI do?” and start asking, “What values should do the steering?”

For the last two years, we’ve been obsessed with technical possibilities. We’ve treated AI like a new engine and spent all our time seeing how fast it can go. But in a turbulent field, speed without a North Star is just a faster way to get lost. If we continue to design simply because a solution is possible, we will keep falling into the Automation Trap.

The truth is, technological possibility should never precede moral clarity. In the era of wicked problems, the right answer doesn’t exist in the data; it exists in our intentions. If we want to move from “Answer Engines” to true Centaur-style collaboration, we have to identify the values we are designing for before we write a single line of code.

The real lesson of Garry Kasparov’s Centaurs wasn’t that they had better computers. It was that they had a better process rooted in human judgment. In the long run, the real competitive advantage won’t be the machine’s speed. It will be our wisdom.